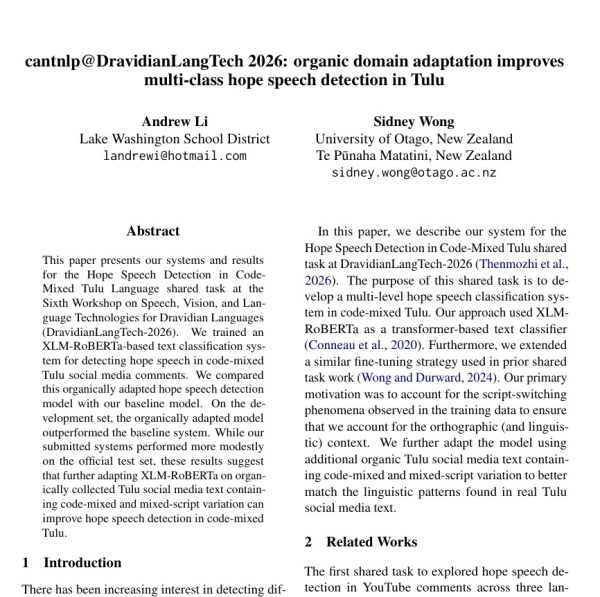

One of the things I like most about computational linguistics is that it constantly reminds me how much important language technology work is happening outside the most visible corners of NLP. Some of the most interesting problems are also some of the most challenging: low-resource languages, code-mixed data, and tasks where meaning depends heavily on social and linguistic context. That is part of why I am especially excited to share that our paper, “cantnlp@DravidianLangTech 2026: organic domain adaptation improves multi-class hope speech detection in Tulu,” has been accepted to the Sixth Workshop on Speech and Language Technologies for Dravidian Languages, held in conjunction with ACL 2026 in San Diego, California. ACL 2026 will take place from July 2 to July 7, 2026, and the workshop is part of the official ACL 2026 workshop program.

This paper was developed for the Hope Speech Detection shared task, which focuses on identifying encouraging, supportive, and positive content in code-mixed Tulu. I found the task compelling because it shifts attention toward a different kind of language technology question. A lot of NLP work on online spaces focuses on detecting harmful language, misinformation, or toxicity. Hope speech detection asks something different: how can we identify language that is constructive, affirming, and community-building? In low-resource and code-mixed settings, that becomes even harder, which is part of what made this project so interesting to me. The DravidianLangTech workshop itself is focused on advancing speech and language technologies for Dravidian languages, including multilingual and code-mixed contexts that are still underrepresented in mainstream NLP research.

In our paper, we explore how organic domain adaptation can improve multi-class hope speech detection performance in this setting. More broadly, the project pushed me to think carefully about what it means to build models that are not only technically effective but also responsive to linguistic variation and real community contexts. That balance is something I keep coming back to in computational linguistics: the best work is often not just about model performance, but about understanding the language setting well enough to ask the right question in the first place.

I’m also very grateful to be the first author on this paper. A huge thank you to my co-author and mentor, Dr. Sidney Wong, for his invaluable guidance, insight, and support throughout the project. I learned a great deal from working on this paper, not only about multilingual NLP and shared-task research, but also about how careful, iterative research gets done.

For me, this acceptance is exciting not just because it is another milestone, but because it reinforces something I have been learning again and again through research: some of the most meaningful problems in NLP are the ones that require patience, context, and attention to languages and communities that do not always receive enough focus. I’m very thankful for the opportunity to contribute, even in a small way, to that work.

— Andrew
